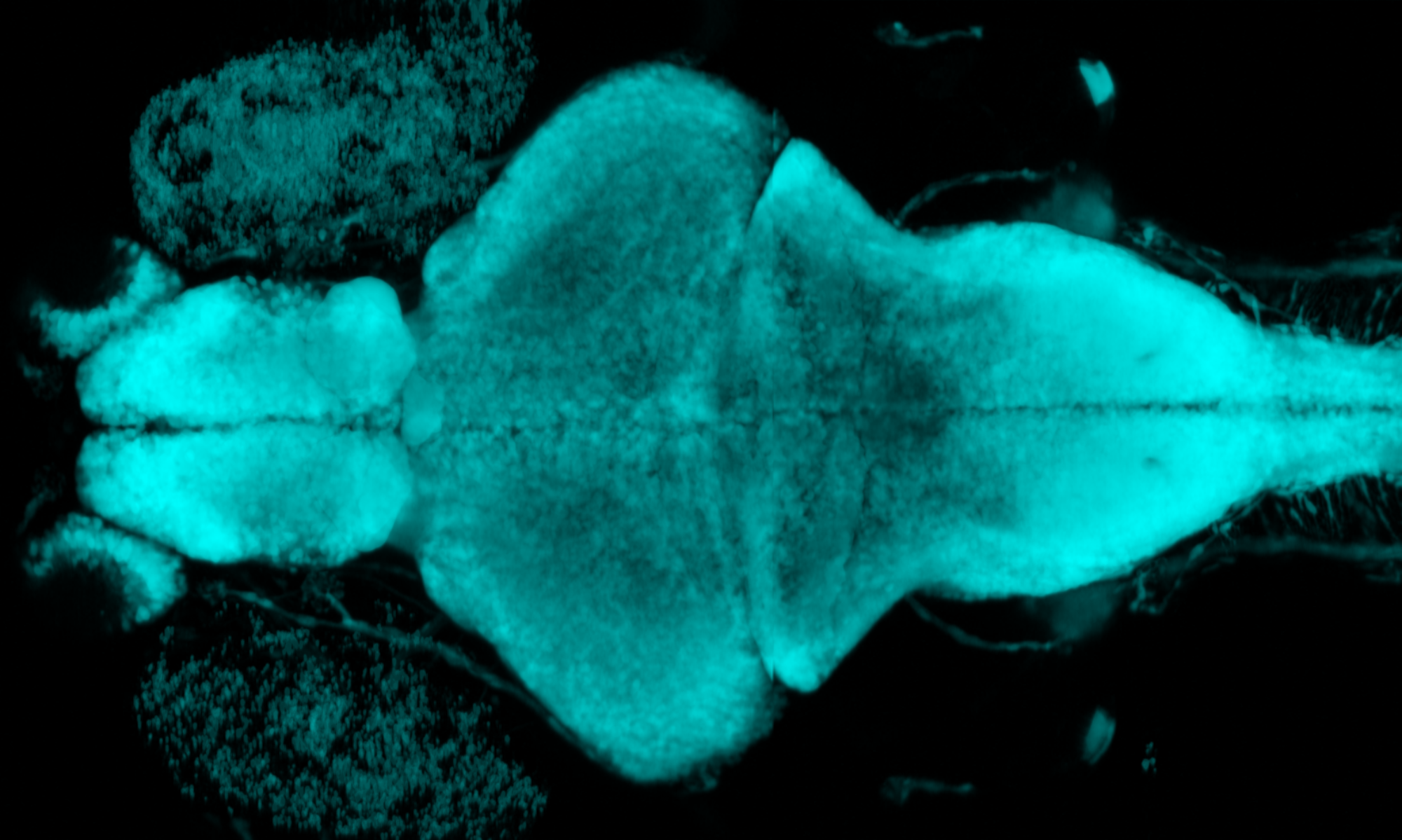

How is information about the external world encoded in sensory organs and subsequently represented by neural activity in the brain? How does the brain use this information in order to make meaningful decisions and generate goal-oriented actions? Research in our group aims to elucidate the mechanisms whereby the vertebrate nervous system processes sensory information and integrates it with internally generated information about ongoing motor activity at the level of synapses, cells and circuits. A main goal is to identify the neural circuits that underlie conserved visually guided behaviors, such as perceptual decision making and the sensory control of target-directed motor sequences. In order to understand how circuit function emerges from synaptic connectivity, we use quantitative analysis of visually guided behaviors, fluorescence-based imaging of neural activity from populations of neurons and synapses, and recordings from single, genetically identified neurons in the zebrafish visual system.

Internal representations of self-motion in the brain

Expecting the sensory consequences of one’s own actions: corollary discharge

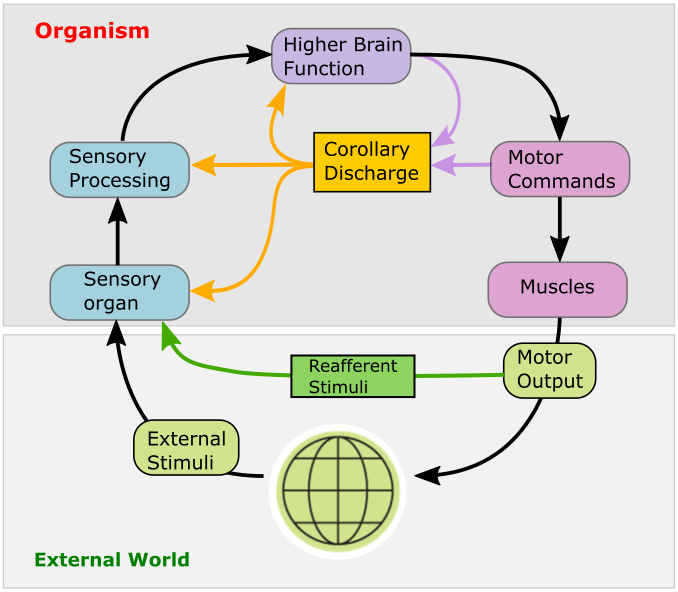

As an animal moves, its sensory organs receive a multitude of sensory inputs that may in principle originate from two different sources: objects in the external world, and the animal’s own motion. To distinguish between the two, the brain uses internally generated signals termed corollary discharge (CD) that encode an animal’s own movements and the expected sensory consequences thereof. CD signals are essential for the brain to construct internal representations of self-generated motion; higher brain functions critically depend on them. For instance, CD signals form the basis for generating sensory prediction errors that encode possible mismatches between expected and actual sensory feedback. The brain can then use these to fine-tune future motor commands: a prerequisite for motor learning. Likewise, CD is essential in generating internal representations of recent locomotor history, which is a prerequisite for navigation and orienting in the absence of external sensory input. Moreover, corollary discharge is thought to be critical for maintaining visual stability and perceptual continuity in the human brain during self-generated movements, and its disruption may create misrepresentations of reality in the brain.

A neural correlate of saccadic suppression

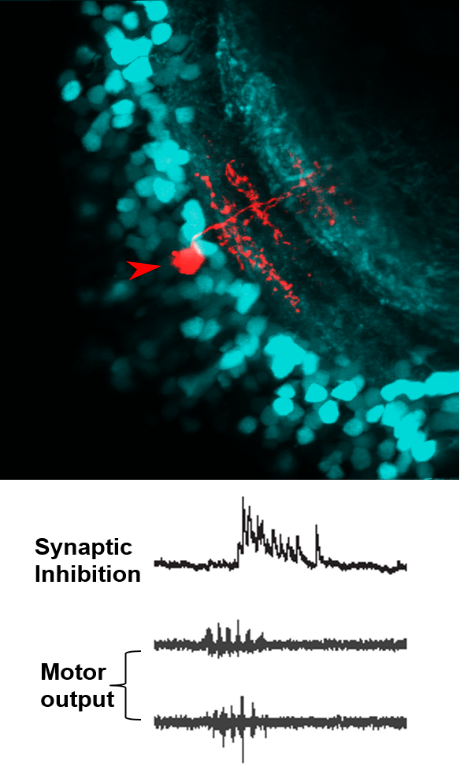

In recent work, we found clear evidence for a corollary discharge mechanism in the main visual processing center by which the processing of visual information and its transmission to downstream motor areas is modulated at the time of saccade-like self-motion. Using whole-cell patch clamp recordings from single neurons in the tectum of zebrafish larvae, we identified an inhibitory synaptic signal, temporally locked to spontaneous and visually driven swim bouts. This motor-related inhibition was appropriately timed to counteract visually driven excitatory input arising from the fish’s own motion, and transiently suppressed tectal spiking activity. Using high-resolution calcium imaging, we could show that localized motor-related signals occur in the superficial layers of the tectal neuropil and the adjacent torus longitudinalis, suggesting that corollary discharge signals enter the tectum via this pathway. Together, these findings show how visual processing is suppressed during self-motion by motor-related phasic inhibition. This may help explain the perceptual phenomenon of saccadic suppression observed in many species, which refers to the observation that our perceptual sensitivity is attenuated during fast saccadic eye movements.

Visual control of goal-directed motor sequences

Reconstructing motor sequences in virtual reality

When hunting prey, zebrafish larvae perform rapid, target-directed sequences of swim maneuvers, controlled by the visual system. To better understand the relation between vision and action during this complex behavior, it is important to obtain a detailed picture of what the fish sees when freely swimming and hunting small paramecia, its natural prey. From high-speed recordings of freely swimming larvae, we quantified the size and speed of prey from the larva’s perspective and analyzed the larva’s eye and tail movements to obtain a detailed description of the stimulus-response relationship. In this way, we found that prey capture sequences are composed of versions of a basic motor pattern, which are superimposed with a graded turning component that allows the larva to adjust its heading direction on a fine, continuous scale, similar to how humans perform fast eye and head movements directed towards a visual target on a graded scale.
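
As a rough illustration of what quantifying prey size and speed "from the larva’s perspective" can involve, the sketch below converts tracked positions into visual angles. It is a minimal example under stated assumptions, not our analysis pipeline: the function names, the 2D top-down coordinates in millimeters, and the heading convention (degrees, counterclockwise from the x-axis) are all hypothetical.

```python
import math


def angular_size_deg(diameter_mm, distance_mm):
    """Visual angle (degrees) subtended by an object of the given
    diameter viewed from the given distance."""
    return math.degrees(2.0 * math.atan(diameter_mm / (2.0 * distance_mm)))


def wrap_deg(angle_deg):
    """Wrap an angle to the interval [-180, 180) degrees."""
    return (angle_deg + 180.0) % 360.0 - 180.0


def azimuth_deg(eye_xy, heading_deg, prey_xy):
    """Angular position of the prey relative to the larva's heading.
    Positive values are counterclockwise (to the left) of the heading."""
    dx = prey_xy[0] - eye_xy[0]
    dy = prey_xy[1] - eye_xy[1]
    bearing = math.degrees(math.atan2(dy, dx))
    return wrap_deg(bearing - heading_deg)


def angular_speed_deg_per_s(azimuth_prev_deg, azimuth_next_deg, dt_s):
    """Angular speed of the prey between two consecutive video frames,
    taking the shortest path around the circle."""
    return wrap_deg(azimuth_next_deg - azimuth_prev_deg) / dt_s


if __name__ == "__main__":
    # Hypothetical values: a 0.2 mm prey item 2 mm ahead and slightly
    # to the left, tracked across two frames recorded 2 ms apart.
    size = angular_size_deg(0.2, 2.0)
    az0 = azimuth_deg((0.0, 0.0), 0.0, (2.0, 0.30))
    az1 = azimuth_deg((0.0, 0.0), 0.0, (2.0, 0.32))
    print(size, az0, angular_speed_deg_per_s(az0, az1, 0.002))
```

Expressing the stimulus in degrees of visual angle rather than in millimeters makes prey at different distances directly comparable, which is what matters for relating stimulus to motor response.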

Subsequently, we developed closed-loop visual stimulation methods, which allow us to elicit multi-step prey capture sequences in larvae embedded in a virtual environment. This allows us to test the role of visual feedback in sequenced motor behaviors. For example, we observed that small spatio-temporal shifts in visual feedback after a swim bout result in significantly longer reaction times. We hypothesize that visual feedback-driven activity interacts with corollary discharge signals and residual activity from previous motor steps, which could lead to accelerated programming of subsequent motor commands. We combine these advanced stimulation techniques with two-photon Ca2+ imaging and patch-clamp recordings in order to investigate the spatio-temporal patterns of activity underlying these visually driven motor sequences.

Neural circuits in vision, perceptual decision making and motor control

Neural circuits mediating behavioral choice

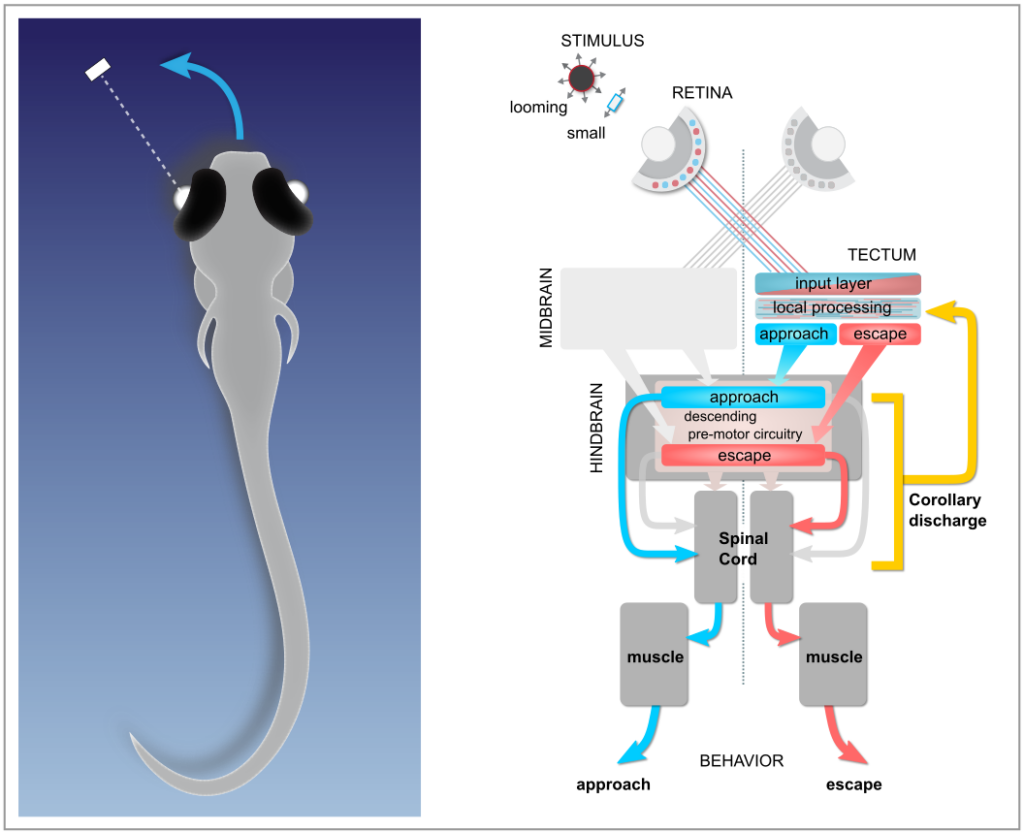

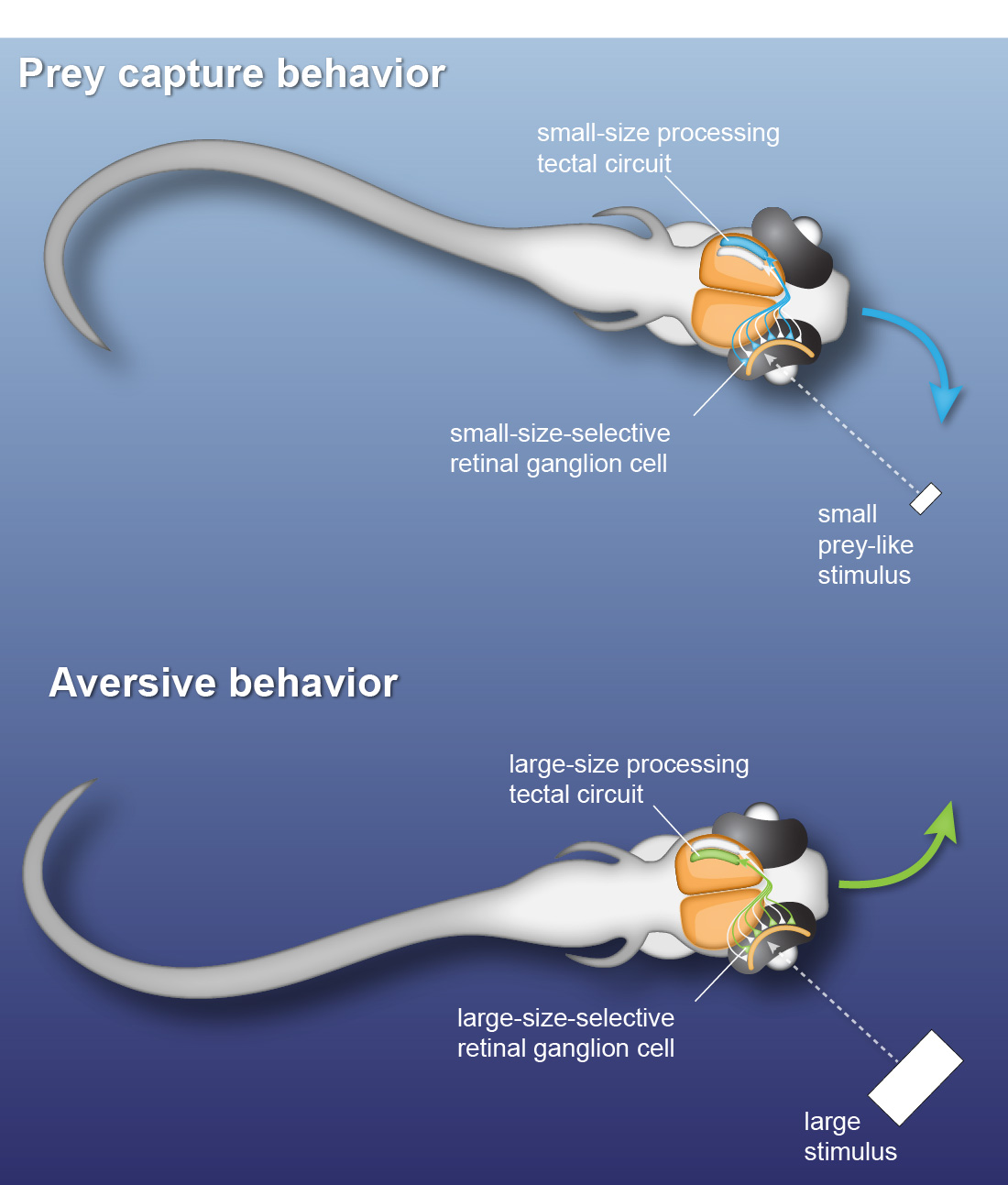

Many daylight-active animals make fast behavioral decisions based on specific visual features of external stimuli. Zebrafish larvae already exhibit such behavioral choice: they perform orienting swims towards a prey-like target or away from a potentially threatening object depending on the size of the visual stimulus. Using kinematic analysis of swimming behavior, multi-photon calcium imaging and single-cell electrophysiology, we find that object size is classified in the activity of different sets of neurons throughout the retinotectal system (Preuss et al., 2014).

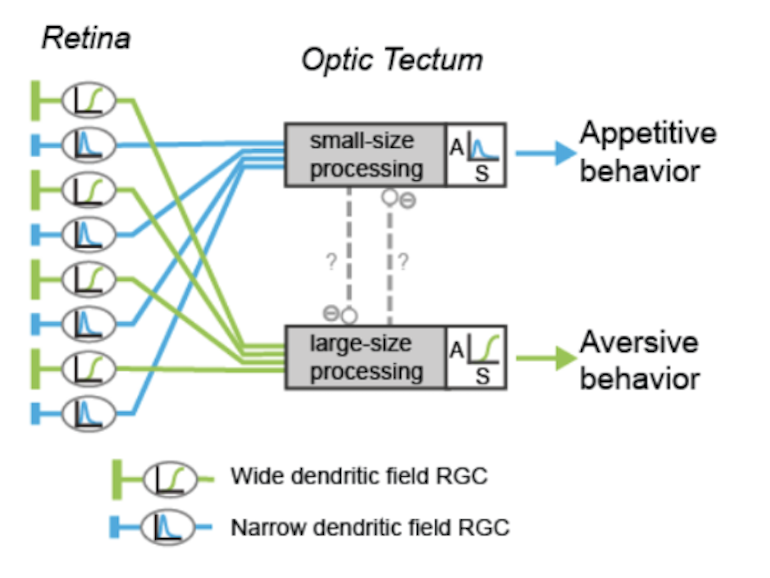

To identify neural circuits involved in controlling such behavioral decisions, we use visual stimuli that mimic salient visual features of prey-like targets or aversive moving objects. In response to these stimuli, we observe that object size is encoded in the activity of different retinal ganglion cell types projecting to the optic tectum. Then, by recording synaptic currents from individual neurons, we find that a class of horizontal cells called superficial interneurons (SINs) exhibits distinct, size-dependent synaptic inputs, depending on the tectal layer in which their dendrites arborize. SINs fall into different response types, with a distinct class of SINs tuned to small, prey-like stimuli. These SINs arborize in the most superficial neuropil, where retinal ganglion cells are predominantly tuned to small moving objects. At the next processing stage, tectal cells in the periventricular zone exhibit differential size selectivities, with many cells tuned either to small or large moving objects. We conclude that behaviorally relevant size classification begins in the retina, and that parallel, size-selective channels activate distinct, size-processing subnetworks in the tectum. These distinct subnetworks may represent a bifurcation point for behavioral decision making during visually controlled motor behavior.

Neural circuits processing visual motion

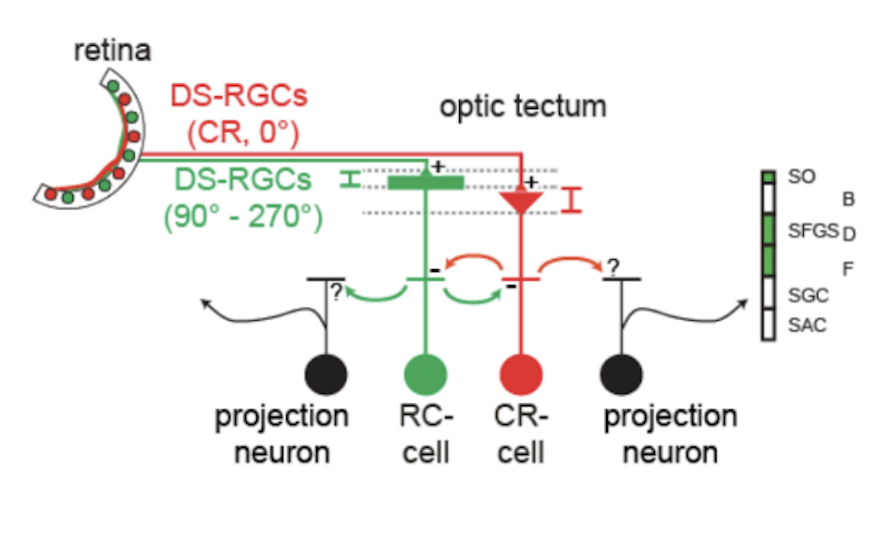

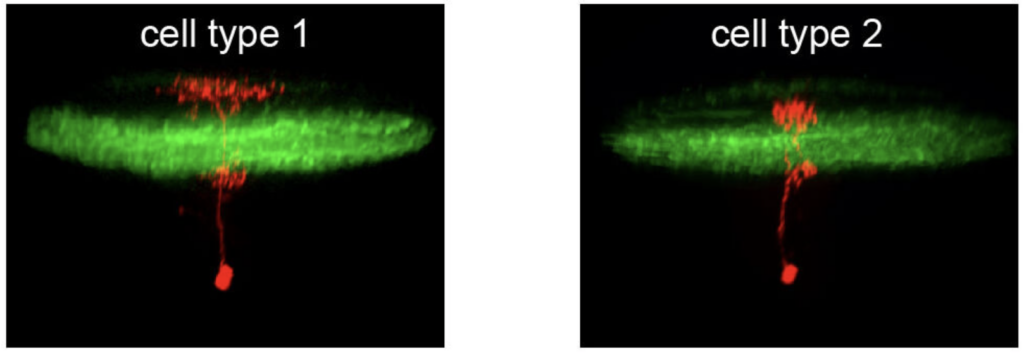

Neurons sensitive to the direction of motion have been observed at all levels of the visual system, but the underlying mechanisms remain controversial. A critical question is whether direction selectivity is coded in retinal ganglion cell activity and relayed to downstream targets, or whether direction selectivity emerges de novo in higher visual circuits from basic, topographically organized input maps. Using multi-photon Ca2+ imaging and single-cell electrophysiology, we find that neurons in the optic tectum with preference for opposing directions are morphologically distinct and exhibit dendritic arborizations in different layers of the tectal neuropil. The dendritic profiles colocalize with presynaptic retinal ganglion cell terminals in sublayers with corresponding directional tuning. Overall, the results demonstrate a remarkable correspondence between the functional and structural profile of morphologically distinct cell types, which indicates that the central principle of layer-specific feature extraction applies not only to the retina but also to higher visual centers.
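
For readers unfamiliar with how directional tuning is commonly quantified, the sketch below computes a vector-sum direction selectivity index and preferred direction from responses to a set of motion directions. This is one widely used metric and is shown only as an illustration; it is an assumption that it resembles the analysis behind the results above, and the function name and input format are hypothetical.

```python
import cmath
import math


def direction_selectivity(directions_deg, responses):
    """Vector-sum direction selectivity.

    directions_deg: stimulus motion directions in degrees.
    responses: non-negative response amplitudes, one per direction
               (for example, peak calcium signal or spike count).

    Returns (dsi, preferred_direction_deg). dsi is 0 for an untuned
    cell and 1 for a cell responding to a single direction only.
    preferred_direction_deg is None if the cell did not respond.
    """
    total = sum(responses)
    if total <= 0:
        return 0.0, None
    # Each response is a vector pointing along its stimulus direction.
    vector_sum = sum(
        r * cmath.exp(1j * math.radians(d))
        for d, r in zip(directions_deg, responses)
    )
    dsi = abs(vector_sum) / total
    preferred = math.degrees(cmath.phase(vector_sum)) % 360.0
    return dsi, preferred


if __name__ == "__main__":
    # Hypothetical responses of one neuron to eight motion directions.
    directions = [0, 45, 90, 135, 180, 225, 270, 315]
    responses = [0.2, 0.9, 1.5, 0.8, 0.1, 0.0, 0.1, 0.1]
    print(direction_selectivity(directions, responses))
```

Because the index is normalized by the summed response, it compares tuning sharpness across cells with very different overall response amplitudes.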

Neural circuits controlling motor output

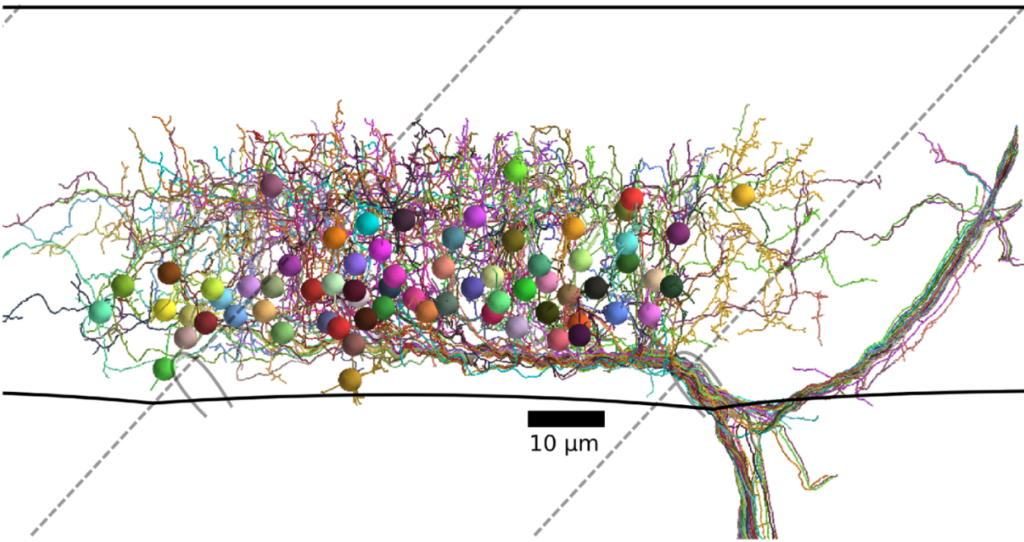

Modern volume electron microscopy enables the imaging of extended brain regions or even entire brains from small animal models in three dimensions at a resolution of a few nanometers. This data is used to anatomically map all synaptic connections contained in such volumes, from which neural circuit motifs and rules of synaptic connectivity can be inferred. In pioneering work (with W. Denk, MPI Martinsried), we used an image stack of zebrafish spinal cord to analyze the connectivity between motor neurons and the interneurons that drive them. From this, we could identify circuit motifs important for the control of motor output, which helps in guiding future experiments to investigate their functional roles.

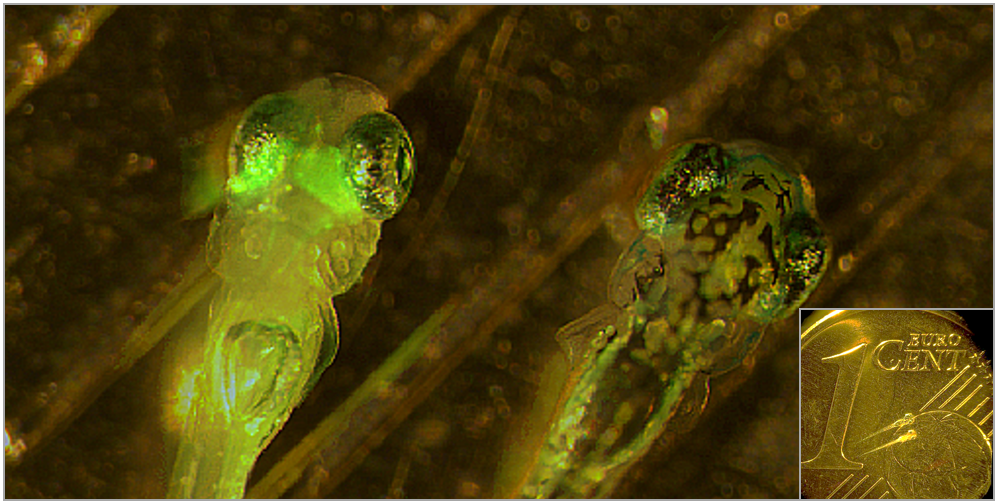

Two zebrafish larvae. The left larva expresses green fluorescent protein (GFP) in the visual system. Inset: two zebrafish larvae, with a euro cent coin for size comparison.